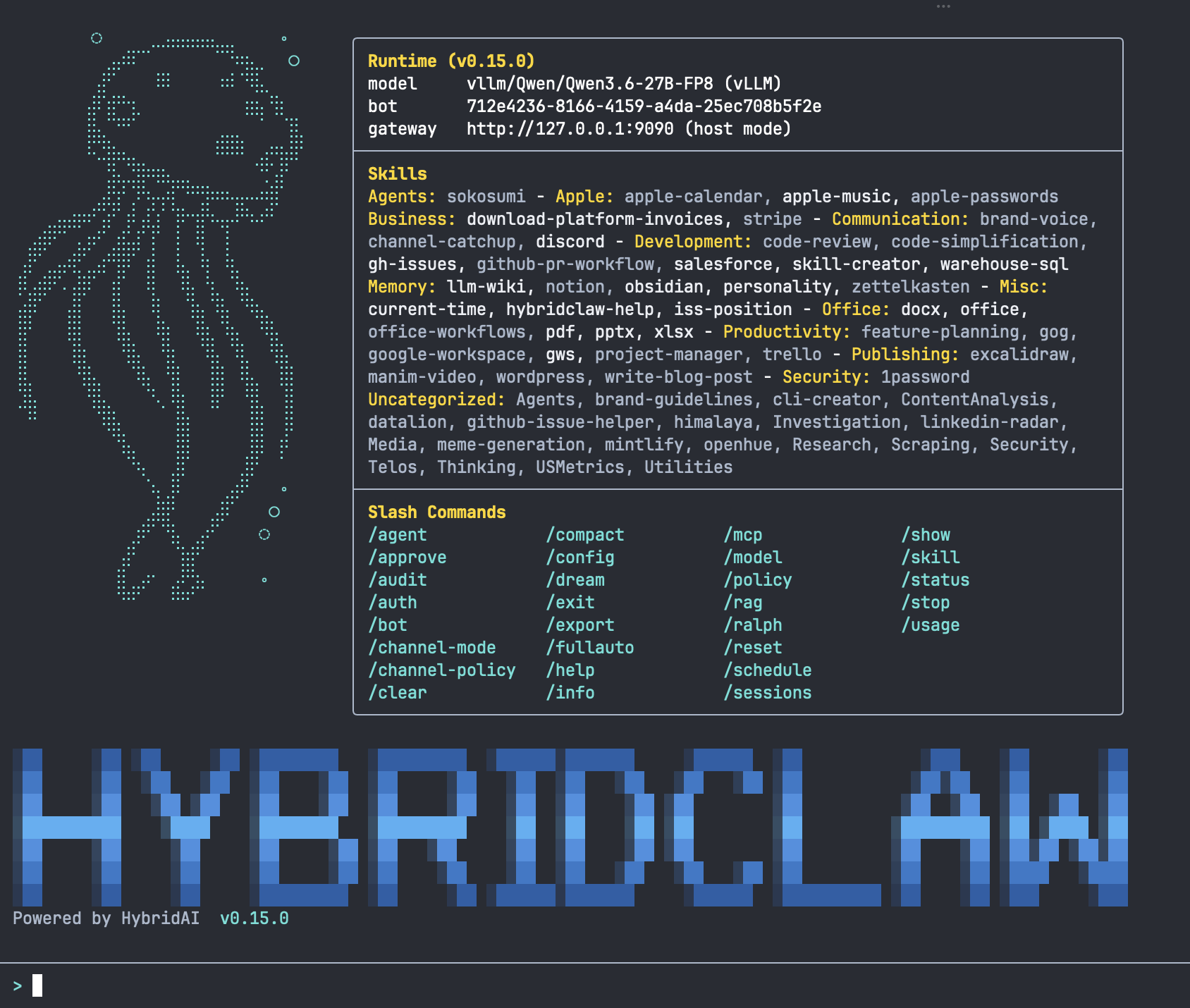

Composable agent skills

Skills: 35+ ready-made capabilities — plus your own

A HybridClaw skill is a discrete, evaluable, versionable unit of agent capability. Mix bundled skills with custom skills for your own processes.

What a skill is — and what it is not

A skill is not a function. A skill is a self-contained capability that promises an outcome ("hand invoice to DATEV", "answer ticket", "research a lead"). It contains a system prompt, tool permissions, expected inputs/outputs, test cases and eval criteria. A skill is auditable, versionable and small enough to be understood by a reviewer in one sitting.

Five properties that define a skill

Together these properties separate a production skill from an improvised prompt.

Discrete

Clearly bounded scope. "Research a lead" is a skill. "Do CRM and ERP and email" is not.

Evaluable

Every skill ships with test cases. A measurable success rate is the precondition for production.

Versioned

Skills are versioned like code. Rollbacks and A/B tests against old versions are possible.

Composable

Skills call other skills, so the 35+ bundled skills combine into hundreds of more complex workflows.
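As a rough illustration of composition, the sketch below chains two stub skills into one workflow. It is a simplification under stated assumptions, not HybridClaw's API: the `RunnableSkill` type, the `pipeline` helper and both stub skills are hypothetical, and a real skill carries far more than a `run` function (prompt, tools, evals) and would normally run asynchronously.

```typescript
// Hypothetical minimal view of a skill: a name plus a run step.
// Synchronous only to keep the sketch short.
interface RunnableSkill<I, O> {
  name: string;
  run: (input: I) => O;
}

// Chains two skills: the output of the first becomes the input of the second.
function pipeline<A, B, C>(
  first: RunnableSkill<A, B>,
  second: RunnableSkill<B, C>,
): RunnableSkill<A, C> {
  return {
    name: `${first.name} -> ${second.name}`,
    run: (input) => second.run(first.run(input)),
  };
}

interface Lead {
  company: string;
  summary: string;
}

// Stub skills standing in for real, model-backed ones.
const researchLead: RunnableSkill<string, Lead> = {
  name: "research-lead",
  run: (company) => ({ company, summary: `Notes on ${company}` }),
};

const draftFollowUp: RunnableSkill<Lead, string> = {
  name: "draft-follow-up",
  run: (lead) => `Hello ${lead.company}, following up. (${lead.summary})`,
};

// The composed workflow is itself a skill and can be composed again.
const leadFollowUp = pipeline(researchLead, draftFollowUp);
```

Because the result of `pipeline` has the same shape as its inputs, composed workflows can be nested, which is how a small set of skills can grow into many workflows.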

Open source

Skills are readable Markdown + YAML. No black box, no vendor lock-in.

Skill categories we ship

The 35+ bundled skills cover the most common business workflows.

Finance

Invoice collection, VAT classification, DATEV/Lexware/SAP hand-off, cost approvals.

Sales

Lead research, CRM updates, follow-up drafts, pipeline reports.

Support

Ticket triage, knowledge retrieval, draft replies, escalation.

BI & analytics

Data questions, report generation, anomaly explanations, dashboard updates.

Operations

Browser workflows, status tracking, blocker escalation, RPA replacement.

IT & admin

System checks, log analysis, approval workflows, on-call triage.

Anatomy of a skill

A skill definition is made of these parts. Each can be extended.

- System prompt. Role, goal, personality, constraints in natural language.

- Tools. Which APIs, browser actions and DB queries the skill may use. Encrypted credentials are injected at runtime.

- Input/output schema. JSON schema or TypeScript types for expected inputs and outputs.

- Test cases. Concrete input/output pairs the skill is evaluated against on every build.

- Eval criteria. When is an answer correct? Example: a tax classification must exactly match the expected tax code.

- Approval rules. Which outcomes need human-in-the-loop sign-off? Example: amounts > 5000 EUR.
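The parts listed above could be modelled roughly as follows. This is a minimal TypeScript sketch, not HybridClaw's actual schema: the `SkillDefinition` type, its field names, the tool name and the example `vat-classification` skill are assumptions made for illustration. Only the six parts and the 5000 EUR approval example come from the text.

```typescript
// Hypothetical shape of a skill definition; all names are illustrative.
interface TestCase<I, O> {
  input: I;
  expected: O;
}

// I and O play the role of the input/output schema.
interface SkillDefinition<I, O> {
  name: string;
  version: string;
  systemPrompt: string; // role, goal, constraints in natural language
  tools: string[]; // tools the skill is allowed to use
  testCases: TestCase<I, O>[]; // evaluated on every build
  isCorrect: (actual: O, expected: O) => boolean; // eval criterion
  needsApproval: (output: O) => boolean; // human-in-the-loop rule
}

interface InvoiceInput {
  description: string;
  amountEur: number;
}

interface InvoiceOutput {
  taxCode: string;
  amountEur: number;
}

const vatClassification: SkillDefinition<InvoiceInput, InvoiceOutput> = {
  name: "vat-classification",
  version: "1.0.0",
  systemPrompt: "You classify incoming invoices by VAT tax code.",
  tools: ["erp.readInvoice"], // hypothetical tool identifier
  testCases: [
    {
      input: { description: "Office chairs", amountEur: 1200 },
      expected: { taxCode: "STANDARD", amountEur: 1200 },
    },
  ],
  // Correct only if the classification code matches exactly.
  isCorrect: (actual, expected) => actual.taxCode === expected.taxCode,
  // Amounts above 5000 EUR require human sign-off.
  needsApproval: (output) => output.amountEur > 5000,
};
```

Keeping the eval criterion and the approval rule as small predicates means a reviewer can read and test them in isolation from the prompt.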

Skill safety and self-improvement

Skills are powerful: they drive real tools, write to real databases, spend real money. Four mechanisms keep that power controllable — and ensure skills get better over time instead of decaying.

Self-evaluation & self-improvement

Every skill grades its own trajectories against the test cases on every run, not just on build. Based on recurring failure classes, the skill drafts prompt or tool adjustments that a human owner can review and merge.

Skill health monitoring

A background process continuously checks eval drift, quality regressions, latency and cost trends per skill. When a skill degrades suddenly, its owners and the on-call engineer are notified before customers notice.
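One simple way to picture such a check is to compare a recent window of measurements against a baseline window. The sketch below is an assumption about how this might work, not a description of the actual monitor: the `HealthSample` fields, the `detectDegradation` function and the 10% default tolerance are all hypothetical.

```typescript
// Hypothetical per-run measurements for one skill.
interface HealthSample {
  passRate: number; // share of eval checks passed, 0..1
  latencyMs: number;
  costEur: number;
}

function mean(values: number[]): number {
  return values.reduce((sum, v) => sum + v, 0) / values.length;
}

// Returns one alert label per metric whose recent average is worse than
// the baseline average by more than the relative tolerance.
function detectDegradation(
  baseline: HealthSample[],
  recent: HealthSample[],
  tolerance: number = 0.1,
): string[] {
  const alerts: string[] = [];
  if (baseline.length === 0 || recent.length === 0) return alerts;

  const basePass = mean(baseline.map((s) => s.passRate));
  const recentPass = mean(recent.map((s) => s.passRate));
  if (recentPass < basePass * (1 - tolerance)) alerts.push("eval drift");

  const baseLatency = mean(baseline.map((s) => s.latencyMs));
  const recentLatency = mean(recent.map((s) => s.latencyMs));
  if (recentLatency > baseLatency * (1 + tolerance)) alerts.push("latency regression");

  const baseCost = mean(baseline.map((s) => s.costEur));
  const recentCost = mean(recent.map((s) => s.costEur));
  if (recentCost > baseCost * (1 + tolerance)) alerts.push("cost regression");

  return alerts;
}
```

A production monitor would likely use longer windows and more robust statistics; the point here is only that each metric is tracked per skill and compared against its own history.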

Security scanner

Before every production deploy, a skill goes through static analysis and an LLM review, which check for prompt-injection risk, excessive tool permissions and dangerous output patterns. Skills with critical findings are blocked.
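The "excessive tool permissions" check lends itself to a small static-analysis sketch. The version below flags tools that a skill is granted but never exercises in its test cases, and treats unused high-risk tools as critical. The `Finding` type, the function names, the tool identifiers and the idea of deriving usage from test cases are assumptions for illustration, not the scanner's real rules.

```typescript
type Severity = "info" | "critical";

interface Finding {
  severity: Severity;
  message: string;
}

// Flags every granted tool that no test case uses. Unused tools on the
// high-risk list are reported as critical, all others as informational.
function scanPermissions(
  granted: string[],
  usedInTests: string[],
  highRisk: string[],
): Finding[] {
  const findings: Finding[] = [];
  for (const tool of granted) {
    if (usedInTests.indexOf(tool) !== -1) continue;
    findings.push({
      severity: highRisk.indexOf(tool) !== -1 ? "critical" : "info",
      message: `tool "${tool}" is granted but never used in test cases`,
    });
  }
  return findings;
}

// A deploy is blocked as soon as one critical finding exists.
function isBlocked(findings: Finding[]): boolean {
  return findings.some((f) => f.severity === "critical");
}
```

For example, a skill granted `crm.read`, `crm.write` and `payments.send` whose tests only use `crm.read` would produce one informational and one critical finding if `payments.send` is on the high-risk list, and would therefore be blocked.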

Sandbox execution

Skills with browser- or code-execution permissions run in isolated containers with their own filesystem, defined network egress policies and resource limits. Maximum power for the skill, clear blast-radius boundary.

Skill questions we hear often

How long does it take to build a custom skill?

Simple skills (e.g. a new mail-routing workflow): 30 minutes to a few hours. More complex skills with their own tool set, evals and approval workflows: one to five days. Skills can be composed iteratively from existing ones.

Can we modify existing skills?

Yes. Skills are versioned — fork the current version, adjust prompt, tools or evals, merge back. In Managed Cloud you can run your own skill variants in parallel with the default version.

What happens when a skill shows a bad eval score?

By default the last stable version stays active. Skills below a configured score threshold automatically move to "needs review" status. Production deploys for such skills are blocked until an owner approves.
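The gating logic described in this answer can be sketched in a few lines. This is a hedged illustration rather than the platform's implementation: `EvalResult`, `passRate`, `statusFor`, `canDeploy` and the status label `"active"` are hypothetical names, and the score is assumed to be the share of passed test cases.

```typescript
type SkillStatus = "active" | "needs review";

interface EvalResult {
  passed: boolean;
}

// Share of test cases that passed (0 when there are no results).
function passRate(results: EvalResult[]): number {
  if (results.length === 0) return 0;
  return results.filter((r) => r.passed).length / results.length;
}

// Skills below the configured threshold move to "needs review".
function statusFor(results: EvalResult[], threshold: number): SkillStatus {
  return passRate(results) < threshold ? "needs review" : "active";
}

// Deploys for skills in "needs review" stay blocked until an owner approves.
function canDeploy(status: SkillStatus, ownerApproved: boolean): boolean {
  return status === "active" || ownerApproved;
}
```

With three of four test cases passing and a threshold of 0.9, `statusFor` returns `"needs review"` and `canDeploy` stays `false` until an owner approves, while the last stable version keeps serving traffic.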

Is there a skill marketplace?

The community already maintains a directory of open skills. A dedicated marketplace for enterprise skills (with signatures, audit reports and licensing) is on the roadmap for Managed Cloud.